Who should care about Autonomous Vehicles?

Every day, I read about more wonderful technologies that will save our lives tomorrow. The Google car will replace drivers, road accidents will disappear and insurance will not be needed.

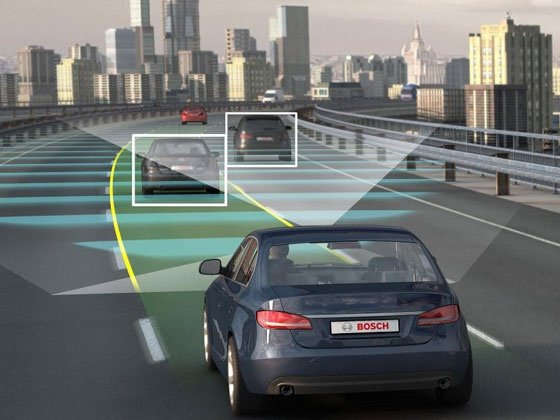

To make this a reality there are new radars, lidars, infrared scanners, A-GNSS, binocular vision, the ability to communicate with traffic systems and other cars at extremely high speed, and even inductive charging from the road…

So, with faster reaction times and consistent algorithmic decisions, we are told these autonomous vehicles could substantially lower the accident rate. Proponents of autonomous vehicles point out there are about 32,000 traffic deaths per year in the U.S…

The question is, what of all that is actually real today and what should insurers monitor more closely?

Google says its driverless cars have collectively driven more than 800,000 km without crashing. That may be impressive, but in terms of collecting data, insurers have gone a bit further. For instance, Direct Line has collected 87 million km of telematics data to date with its black box and self-installed devices.

A Lloyd’s report suggested that “Lower claims would be expected to result in lower premiums, and tighter profit margins. Some might argue that if cars really do become crash-less, there may not even be a need for motor insurance.”

Yet it also stated that the complicated risk profile of the technology and the potential for large losses meant that insurers would have to be involved in regulatory negotiations and the development of new and existing insurance products if the technology was to become commercially viable.

Since insuring the driver is not an option, are the insurers going to be pushed to pure product liability coverage?

Maybe not yet: there are clearly risks associated with autonomous vehicles today. These are very complex and immature systems prone to software bugs, hardware failures, inaccurate maps, improper maintenance and unforeseen situations no algorithm can handle.

In October 2013, Michael Barr, a software expert, testified in a landmark legal case in Oklahoma that a driver could lose control of the accelerator due to a software glitch if it was not reliably detected by any fail-safe backup system. The jury found the OEM liable for the death caused in the accident produced by the glitch.

It may be early days but so far the systems are not faultless and as potential losses may be high, a commercial market for autonomous and unmanned technology is unlikely to evolve unless insurance is available, says Lloyd’s, adding: “Autonomous and unmanned vehicles are a big unknown in many ways, and by careful scrutiny and mitigation of new risks, insurers can assist in the groundwork for adequate regulation.”

Clearly, some element of risk will remain with the car owner for a while, a long while even. And the car manufacturers are the first to agree. The research actually done on autonomous vehicles by the motor industry is absolutely not focussed on the robot taxi that Google is working on.

As part of the AdaptIVe programme, OEMs such as Volkswagen spoke at the recent ITS World Congress in a vastly overcrowded back room of the event – a sign that even the organisers had not realised the full impact of the conversation on the ITS industry.

What transpired from the session was that Google is blinding us with its impossibly expensive equipment, creating a vehicle that might be used at best in controlled environments, while the actual car manufacturers are working on degrees of automation to assist the driver and prevent accidents. Google's laser scanners and other instruments are very expensive; OEMs are working with much cheaper ones and are aiming at mass-market introduction by 2020.

It is important at this stage to explain the SAE Levels of Automation. They classify vehicle features by the increasing level of autonomy they bring to the vehicle system and by the level of intervention and supervision still needed from the driver.

For example, lane departure warning is Level 0. The driver is in full control and the warning can be overridden. Level 5 is the fully autonomous vehicle Google is working on.

What really matters are the features at the levels in between. Cruise control is Level 1 (Driver Assistance): it requires full driver supervision and intervention.

Automated parking, autonomous emergency braking and dynamic stability control are examples of Level 2 (Partial Automation). The driver is expected to perform all remaining aspects of the driving task. This is the level for which the recent changes to the UN Convention on Road Traffic (1968 Vienna Convention) were made.

Level 3 – or, more precisely, between 2 and 3 – is where the liability issues start. This is Conditional Automation. All aspects of the driving task are taken over by the system with the expectation that the driver will respond appropriately to a request to intervene.

Traffic jam chauffeur is Level 3 – here supervision is not 100% necessary but the driver is expected to react to an emergency, so his attention is needed at all times. Dozing off in slow traffic while the car is driving for you is to be expected, and is one of the issues being addressed by AdaptIVe.

Level 4 (High Automation) removes the need for the human driver to intervene but is used only in controlled conditions, such as specific road types. So the example given by Elon Musk in the recent presentation of the Tesla D, that the new car can get out of the garage by itself, is Level 4 automation. Level 5 is on all roads.
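For readers who think in code, the classification above can be written down as a small lookup. This is only a rough sketch of how this article sorts its examples, not an official SAE feature list: the names `SAELevel`, `FEATURE_LEVELS` and `driver_role`, the feature labels and the wording of the driver roles are all my own shorthand.

```python
from enum import IntEnum


class SAELevel(IntEnum):
    """The six SAE levels of automation, from 0 to 5."""
    NO_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_AUTOMATION = 2
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


# Example features, placed at the level this article assigns to them.
# The labels are illustrative shorthand, not standard terminology.
FEATURE_LEVELS = {
    "lane departure warning": SAELevel.NO_AUTOMATION,
    "cruise control": SAELevel.DRIVER_ASSISTANCE,
    "automated parking": SAELevel.PARTIAL_AUTOMATION,
    "autonomous emergency braking": SAELevel.PARTIAL_AUTOMATION,
    "dynamic stability control": SAELevel.PARTIAL_AUTOMATION,
    "traffic jam chauffeur": SAELevel.CONDITIONAL_AUTOMATION,
    "driverless garage exit": SAELevel.HIGH_AUTOMATION,
    "robot taxi": SAELevel.FULL_AUTOMATION,
}


def driver_role(level):
    """Summarise what is still expected of the human at a given level."""
    if level <= SAELevel.PARTIAL_AUTOMATION:
        return "drives or supervises at all times"
    if level == SAELevel.CONDITIONAL_AUTOMATION:
        return "must respond to a request to intervene"
    if level == SAELevel.HIGH_AUTOMATION:
        return "no intervention needed, in controlled conditions only"
    return "no intervention needed, on all roads"


if __name__ == "__main__":
    for feature, level in FEATURE_LEVELS.items():
        print(f"Level {int(level)}: {feature} -> driver {driver_role(level)}")
```

The point of the sketch is the boundary at Level 3: below it the human is always responsible, above it the system is, and at Level 3 itself the answer depends on whether the driver responded in time.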

Today the work done by programmes such as AdaptIVe is concentrating on Levels 2 and 3 and on the human response to slowly increasing levels of automation, together with their legal repercussions. These steps are not separated or independent; it is clear that the jump between the different automation levels will be made dynamically, based on the environment and other predetermined factors. So the same vehicle will use different levels of automation on private property or in slow traffic.

Back to our liability issue: this dynamic level of automation makes it impossible to know how much testing is needed, since the number of cases is unlimited and they can’t all be considered. The – even partly – autonomous car might be driving well but other cars might still crash into it. What is the effect of the driver being at the wheel but with his reactions slowed by drowsiness, work or alcohol? What is the long-term effect on skills and behaviour of using automated vehicles? If cars have different levels of automation, how will a driver react when using a new car with different priority requests? All these issues, and the liability element they come with, are not yet solved.

There are also many technical issues to work out, such as the lack of high-definition ADAS maps – promised for the last 10 years – to name just one.
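To make the idea of dynamic switching concrete, here is a deliberately naive sketch. Everything in it is hypothetical: the context names, the level allowed in each context and the fallback value are invented for illustration and do not come from AdaptIVe, SAE or any manufacturer.

```python
# Hypothetical policy: the highest automation level allowed in each driving
# context. These numbers are made up for illustration only.
MAX_LEVEL_BY_CONTEXT = {
    "private_property": 4,  # e.g. the car leaving the garage by itself
    "slow_traffic": 3,      # e.g. a traffic jam chauffeur
    "motorway": 2,          # e.g. lane keeping under driver supervision
}

# Assumed fallback for any context the policy does not know about.
DEFAULT_MAX_LEVEL = 1


def active_level(context, vehicle_max_level):
    """Return the level in use: the lower of what the context allows
    and what the vehicle is capable of."""
    allowed = MAX_LEVEL_BY_CONTEXT.get(context, DEFAULT_MAX_LEVEL)
    return min(allowed, vehicle_max_level)


if __name__ == "__main__":
    # The same Level 4 capable car moving through different contexts.
    for context in ("private_property", "slow_traffic", "motorway", "city_centre"):
        print(context, "->", active_level(context, vehicle_max_level=4))
```

Even in this toy version the difficulty is visible: every extra context or factor multiplies the number of transitions between levels that somebody has to test and, eventually, insure.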

So is it a case of wait and see? Not exactly: the presentation of the Tesla I referred to earlier was a big stone thrown into the pool.

The car offers adaptive cruise control, lane keeping on freeways, speed limit sign and traffic light recognition, detection of other cars or objects around the vehicle, and emergency braking.

The Tesla can also park itself automatically without the driver at the wheel. The sensors Tesla used are a forward-looking radar, a camera with image recognition, as well as a 360-degree ultrasonic sonar. The last element is the integration with GPS data and real-time traffic information. Tesla’s launch presentation can be seen here [link]

If the driver makes a steering wheel move that seems dangerous, he will get tactile feedback while still being able to override it. All these crazy futuristic features are in a car on sale today. Whether or not they create a safer car is as yet unknown, so the potential safety benefit will not translate into insurance premium discounts. It will be interesting to see who underwrites the Tesla D and for how much. Insurance coverage for the crash-proof Tesla S or Mercedes S class in the US today apparently costs between $1,000 and $4,000 per year… clearly not because of, or despite, the safety features, but yes, quite a lot more than for all the crash-ready cars out there. So it seems the autonomous vehicle could have an impact on the insurance market after all: in the short term a very positive one, because it is pushing the vehicle price up while the risk benefits are not measurable.

In the medium term, it is the fleet management system providers that need to look at autonomous safety functions becoming standard in commercial fleets. What effect would this have on driving behaviour management and all those third-party black box vendors? Further down the line, could eCall or SVR become obsolete? I am not sure, but I really want to see a stolen vehicle recovery system that involves the car driving back to its owner.
